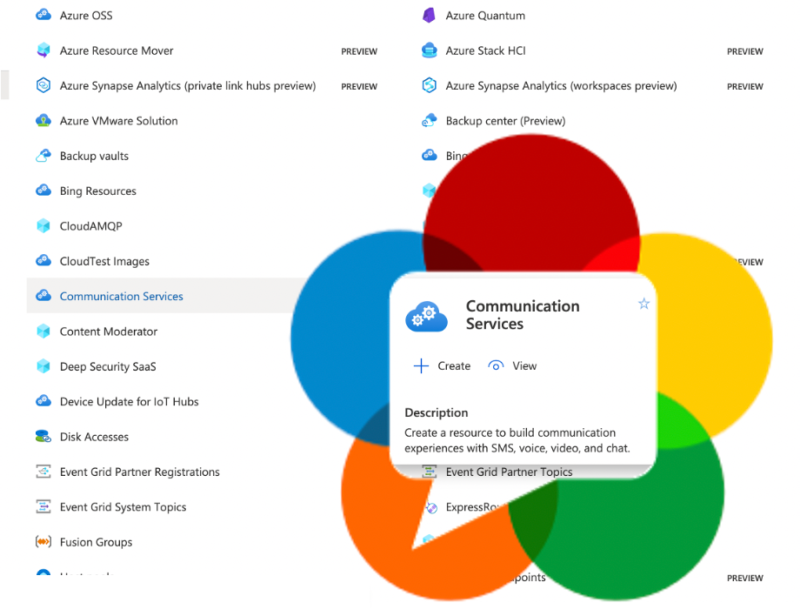

Walkthrough and deep analysis of how Azure Communication Services makes use of WebRTC, by Gustavo Garcia

SDP

RED: Improving Audio Quality with Redundancy

Back in April 2020, Citizen Lab reported on Zoom’s rather weak encryption and stated that Zoom uses the SILK codec for audio. Sadly, the article did not contain the raw data needed to validate that claim and let me look at it further. Thankfully, Natalie Silvanovich from Google’s Project Zero helped me out using the Frida tracing […]

Not a Guide to SDP Munging

SDP has been a frequent topic, both here on webrtcHacks and in the discussion about the standard itself. Modifying the SDP in arcane ways is referred to as SDP munging. This post gives an introduction to what SDP munging is, why it’s done, and why it should not be done. This is not […]

Is everyone switching to Unified Plan?

Review of Chrome’s migration to WebRTC’s Unified Plan, how false metrics may have misguided this effort, and what that means moving forward.

A playground for Simulcast without an SFU

Simulcast is one of the more interesting aspects of WebRTC for multiparty conferencing. In a nutshell, it means sending three different resolutions (spatial scalability) and different frame rates (temporal scalability) at the same time. Oscar Divorra’s post contains the full details. Usually, one needs an SFU to take advantage of simulcast. But there is a […]