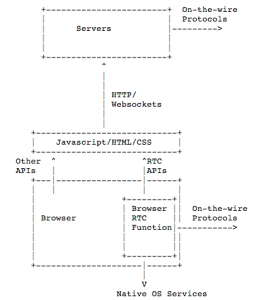

As I described in the standardization post, the model used in WebRTC for real-time, browser-based applications does not envision that the browser will contain all the functions needed to act as a telephone or video conferencing unit. Instead, it specifies that the browser will contain the functions that are needed to run a Web application which would […]