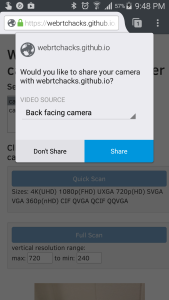

Back in October 2013, the relative early days of WebRTC, I set out to get a better understanding of the getUserMedia API and camera constraints in one of my first and most popular posts. I discovered that working with getUserMedia constraints was not all that straight forward. A year later I gave an update after the […]