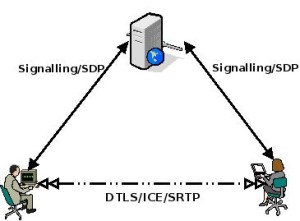

WebRTC promises to greatly simplify the development of multimedia realtime communications, without the need to install an application or browser plug-in. It enables this by exposing a media engine and the network stack through a set of specialised APIs. Application developers can use these APIs to easily add realtime communication to web applications. The defined […]

The IMS approach to WebRTC

The first post we published on webrtcHacks was ‘A Hitchhiker’s Guide to WebRTC standardization’ (July 2013), where we gave some initial insight into 3GPP activities around WebRTC and IMS. Since then, the situation has certainly evolved (well, probably not as fast as some would have expected). Since we regularly receive emails asking about […]

What is a WebRTC Gateway anyway? (Lorenzo Miniero)

As I mentioned in my ‘WebRTC meets telecom’ article a couple of weeks ago, at Quobis we’re currently involved in 30+ WebRTC field trials/POCs that involve a telco network in one way or another. In most cases, service providers are trying to provide WebRTC-based access to their existing/legacy infrastructure and services (fortunately, in some cases it’s […]

webrtcHacks Meetup at Mobile World Congress (MWC)

Next week Mobile World Congress (MWC) 2014 will take place in Barcelona, Spain. Since Barcelona is my hometown, it’s always a great opportunity to meet with industry friends and enjoy some local spots together. Many webrtcHackers will be in Barcelona for the event, so we are organizing a meetup next Tuesday at 6PM CET. This event will […]

WebRTC beyond one-to-one communication (Gustavo Garcia Bernardo)

WebRTC and its peer-to-peer capabilities are great for one-to-one communications. However, when I discuss use cases and services that go beyond one-to-one (namely one-to-many or many-to-many) with customers, the question arises: “OK, but what architecture shall I use for this?” Some service providers want to reuse the multicast support they have in their networks (we […]